In six of the seven facilities of the Molecular Foundry, scientists at benches or instruments, in lab coats or clean room suits, are hard at work creating and characterizing nanoscale materials. Sandwiched in between those levels of laboratories, however, is a different kind of lab — one within a more traditional workspace of offices and cubicles.

“Our computing is our lab space,” said David Prendergast, director of the Berkeley Lab’s Theory of Nanostructured Materials Facility, located on the third floor of the Foundry.

The Molecular Foundry is one of five national user facilities for nanoscale science research around the country that provide state-of-the-art instruments and expertise to users from all over the world. These centers bring together people of various specialties to work together in this multidisciplinary field. Physicists, chemists, materials scientists and biologists; scientists and engineers; university, national lab and industrial groups are all served by the Molecular Foundry.

“The Theory Facility’s role within all of that infrastructure is to try to offer one of three things that we feel are necessary to do good science: You should be able to make things that you want to make. You should be able to see that you have made what you wanted to make. Then our role is to provide understanding that connects what was made with what it does – its particular function,” said Prendergast.

“Nanoscale” refers to particles and processes within a particular size range. The field spans a broad range of materials and phenomena, from individual atoms at the smaller end, to length scales just below the size of living cells at the larger end. A nanometer is one-billionth of a meter. For comparison, a human hair is approximately 10,000 to 100,000 nanometers wide, one gold atom has a diameter of about a third of a nanometer, and typical cells are larger than 1,000 nanometers.
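To make these length scales concrete, the short sketch below works through the arithmetic using only the approximate sizes quoted above. The function and constant names are illustrative choices, not part of any Foundry software.

```python
NM_PER_METER = 1_000_000_000  # a nanometer is one-billionth of a meter

# Approximate sizes quoted in the article, in nanometers.
GOLD_ATOM_DIAMETER_NM = 1 / 3
HAIR_WIDTH_RANGE_NM = (10_000, 100_000)
CELL_SIZE_NM = 1_000


def nm_to_m(length_nm):
    """Convert a length in nanometers to meters."""
    return length_nm / NM_PER_METER


def atoms_across(width_nm, atom_diameter_nm=GOLD_ATOM_DIAMETER_NM):
    """Rough count of atoms that fit side by side across a given width."""
    return round(width_nm / atom_diameter_nm)


for width in HAIR_WIDTH_RANGE_NM:
    print(f"hair {width} nm wide: about {atoms_across(width)} gold atoms across")
print(f"cell {CELL_SIZE_NM} nm wide: about {atoms_across(CELL_SIZE_NM)} gold atoms across")
```

By this rough estimate, a human hair is tens to hundreds of thousands of gold atoms wide, while a typical cell spans a few thousand.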

Nanoscience includes entities such as atoms, molecules, polymers (for example, plastics), and proteins. It also covers the influence of environments on the behavior of these objects, such as the water around a protein, and tries to understand how their function might change depending on where they are: exposed on the surface of a material or embedded within a computer chip, for example. For applications such as building more efficient electrical energy storage in batteries or creating better fuels, all the processes that occur in these devices on a fundamental level happen at the nanoscale.

The Molecular Foundry’s Theory Facility develops models of nanoscale materials or phenomena that can be tested through computational experiments. If the model is a good enough simulation of reality, then these computational experiments can sometimes serve as less labor-intensive and more detailed studies than actual experiments. However, nanoscale problems are often large enough to exceed what a personal laptop can compute.

“Much like a chemist needs a lab, with benches, glassware, solvents, goggles and lab coats, we need computing infrastructure. We need lots of fast processors. We need to connect them with high-speed networks. We need efficient compilers and libraries to make the most of that hardware,” said Prendergast. “We need to be able to write and use software that simulates nature. We need to be able to code, we need to be able to script complex tasks and define workflows. We need all of this to be able to run calculations within a supercomputing environment.”

Modeling molecules in nature

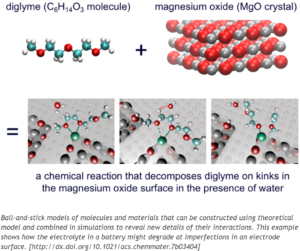

One kind of model the Theory Facility produces is the ball-and-stick model of molecules or crystals. The researchers build these on a computer to simulate their structural and electronic properties, as well as chemical reactions that happen between them. If there were a physical beaker of the substance, the atoms would also be moving or vibrating; this motion reflects the temperature of a substance. So the theorists also have to model how that motion affects properties such as reaction rates. Prendergast likens running simulations to imagining how nature evolves in time at the atomic scale, then hitting start on a stopwatch and observing the possible outcomes.
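The “stopwatch” picture can be illustrated with a toy calculation. The sketch below is not the Theory Facility’s software. It assumes a single vibrating bond modeled as a one-dimensional spring in made-up units (mass and stiffness both set to 1) and steps it forward in time with velocity Verlet, a standard integration scheme in molecular dynamics. All names and parameter values are hypothetical.

```python
import math

K_SPRING = 1.0  # spring stiffness (assumed toy value)
MASS = 1.0      # particle mass (assumed toy value)


def spring_force(x):
    """Restoring force of a harmonic 'bond' stretched by x."""
    return -K_SPRING * x


def velocity_verlet(x, v, force, mass, dt, n_steps):
    """Advance position x and velocity v through n_steps of size dt."""
    trajectory = [(x, v)]
    f = force(x)
    for _ in range(n_steps):
        v_half = v + 0.5 * dt * f / mass  # half-step velocity update
        x = x + dt * v_half               # full-step position update
        f = force(x)                      # force at the new position
        v = v_half + 0.5 * dt * f / mass  # finish the velocity update
        trajectory.append((x, v))
    return trajectory


def total_energy(x, v):
    """Kinetic plus potential energy of the spring."""
    return 0.5 * MASS * v * v + 0.5 * K_SPRING * x * x


# "Hit start": stretch the bond by 1 unit, release it, and watch for 10 time units.
trajectory = velocity_verlet(x=1.0, v=0.0, force=spring_force,
                             mass=MASS, dt=0.01, n_steps=1000)
energies = [total_energy(x, v) for x, v in trajectory]
energy_drift = max(energies) - min(energies)
final_x = trajectory[-1][0]
exact_x = math.cos(10.0)  # analytic solution for this toy spring
print(f"final position {final_x:.4f} (exact {exact_x:.4f}), energy drift {energy_drift:.2e}")
```

Real simulations track many interacting atoms in three dimensions with forces derived from physical models, but the time-stepping loop has the same basic shape. In such simulations, the average kinetic energy of the atoms is what connects the motion to temperature.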

Scientists may want to see how a certain molecule, represented as a formula with letters and numbers, actually realizes itself in 3D space. They could enter the written molecular formula into one of the computer programs used by the Theory Facility and calculate what structure it will adopt, including how that might change with the temperature or pH of the surrounding environment. Another point of interest might be the object’s electronic properties, or what the electrons are doing on top of the atomic structure. If you shine light on that object, will it absorb that light? Will it emit light in different colors, or induce a reaction?

“There’s an adage that a friend of mine adopts. It’s ‘ABC’: Always be computing,” said Prendergast. “Those are your experiments that should always be going on in the background. You have an idea, you quickly try to convert that into a simulation and have it running while you go off and think about other things. The computing can be ‘running the experiment.’ You want to get into that mode where you’re constantly testing ideas because a good fraction of the time, some results you get will be surprising and they’ll teach you new things about the system you are studying.”

A diverse portfolio of computing

The complexity of modeling at the nanoscale requires a significant amount of computing to simulate reality. In order to “always be computing,” the Theory Facility leverages a diverse portfolio of computing resources. They have their own clusters: tightly connected computers that work together by communicating results back and forth at high speed. These fully owned resources are used to develop new ideas quickly. Berkeley Lab’s institutional Lawrencium cluster is also used to supplement those resources. And the National Energy Research Scientific Computing Center (NERSC), a large national supercomputing facility run for the U.S. Department of Energy by Berkeley Lab that serves thousands of users, is used for very large “production” runs.

On smaller resources such as their own clusters, the Theory Facility researchers can do the original testing to figure out parameters that may be initially unclear, such as how a calculation should run, how long it should run for, or what size it needs to be. The group can use their own resources as much as they want and often receive instantaneous feedback. When their calculations and code are ready, they can then move on to NERSC for production-based computing.

“NERSC is really good for very large and well-behaved calculations. But to get there, there’s a lot of trying to learn about how to run the right simulation for the right system and writing the right software that would allow you to do that well,” said Prendergast.

The Lab’s Scientific Computing Group (SCG) builds and maintains the Theory Facility’s computing clusters. The SCG also manages Lawrencium, an institutional high-performance computing cluster that Lab groups, like the Theory Facility, can invest in through their Condo Cluster program, whereby PIs can purchase and contribute hardware. In this service model, Theory Facility researchers can use their own share as much as they want, but when those resources are idle, other researchers can use them in order to make the best use of this investment.

“It helps the larger computational research community here at the Lab. We all get to benefit from these shared resources,” said Prendergast.

The Theory Facility also consults with the SCG on what computing systems to buy.

“[SCG manager] Gary Jung helps us buy new systems because his group knows the direction of the technology, the latest hardware offerings, and its current pricing. He can advise us on the best use of the dollars that we get from the taxpayer – to find the computing solution that works for us that is also cost-effective,” Prendergast said.

Simulating what X-rays see

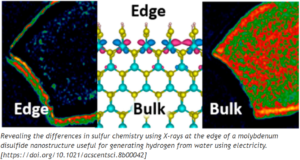

The Theory Facility often collaborates with scientists at the Advanced Light Source (ALS) to understand measurements made at its sister user facility. Some of Prendergast’s research relates to X-ray spectroscopy, a class of measurements that occur at the ALS. Similar to the way human eyes see color within the narrow visible-light portion of the electromagnetic spectrum, X-rays can be used to “see” atoms of specific elements in objects using wavelengths smaller than 10 nanometers. If scientists wanted to study a wooden table, they could tune X-ray “colors” (photon energies or wavelengths) to look only at its carbon content or only the oxygen in the object. Similarly, within those oxygen atoms, different “shades” of the same X-ray “color” reveal different oxygen chemistry. In this way, X-rays can reveal that seemingly homogeneous objects have more complex compositions.
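The link between X-ray “color” and wavelength follows from the standard relation E = hc/λ between photon energy and wavelength. The sketch below applies it to the 10-nanometer figure above. The carbon and oxygen K-edge energies are approximate tabulated values added here for illustration, not figures from the article, and the function names are hypothetical.

```python
HC_EV_NM = 1239.84198  # Planck constant times speed of light, in eV*nm


def wavelength_nm_to_energy_ev(wavelength_nm):
    """Photon energy in electronvolts for a wavelength in nanometers."""
    return HC_EV_NM / wavelength_nm


def energy_ev_to_wavelength_nm(energy_ev):
    """Wavelength in nanometers for a photon energy in electronvolts."""
    return HC_EV_NM / energy_ev


# Approximate K-edge (1s) absorption energies in eV; assumed reference values.
K_EDGES_EV = {"carbon": 284.2, "oxygen": 543.1}

print(f"10 nm corresponds to about {wavelength_nm_to_energy_ev(10.0):.1f} eV")
for element, edge_ev in K_EDGES_EV.items():
    wavelength = energy_ev_to_wavelength_nm(edge_ev)
    print(f"{element}: about {edge_ev} eV, or {wavelength:.2f} nm")
```

Under these assumptions, tuning to carbon or oxygen means selecting photon energies a few hundred electronvolts apart, both at wavelengths well below 10 nanometers.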

Prendergast’s role is running computer simulations of what the X-rays are probing to convert the measurements into information about chemistry and electronic properties. Scientists planning experiments at the ALS may ask the Theory Facility what they should expect to see in order to interpret a measurement or decide whether to go ahead with the experiment.

“That’s a very useful validatory tool, to go from a measurement on one object with a guess about what it might be to some model of the same thing. If we find a match between what we can simulate for the measurement using the model and what is actually measured, then maybe the initial guess is correct. If it doesn’t quite match, our intuition can be revised and we learn something new,” he explained.
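The matching step Prendergast describes can be sketched as scoring how closely each candidate model’s simulated spectrum resembles the measurement. Everything below is a hypothetical illustration: the spectra are synthetic Gaussian peaks rather than real data, cosine similarity is just one plausible choice of score, and none of the names come from the Theory Facility’s tools.

```python
import math


def gaussian_spectrum(energies, peaks, width=0.5):
    """Toy spectrum: a sum of Gaussian peaks given as (center, height) pairs."""
    return [
        sum(h * math.exp(-0.5 * ((e - c) / width) ** 2) for c, h in peaks)
        for e in energies
    ]


def cosine_similarity(a, b):
    """Similarity of two spectra sampled on the same energy grid (1.0 = same shape)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def best_match(measured, candidates):
    """Score every candidate against the measurement and return the best one."""
    scores = {name: cosine_similarity(measured, spec)
              for name, spec in candidates.items()}
    return max(scores, key=scores.get), scores


# Synthetic stand-ins for a measurement and two simulated guesses (not real data).
energies = [530.0 + 0.1 * i for i in range(101)]  # toy grid, 530 to 540 eV
measured = gaussian_spectrum(energies, [(533.0, 1.0), (537.0, 0.6)])
candidates = {
    "guess_a": gaussian_spectrum(energies, [(533.1, 1.0), (537.0, 0.5)]),
    "guess_b": gaussian_spectrum(energies, [(531.0, 1.0), (535.0, 0.6)]),
}

best, scores = best_match(measured, candidates)
print(best, {name: round(score, 3) for name, score in scores.items()})
```

A high score for one guess suggests the initial picture may be right, while uniformly poor scores are the signal to revise the model and try again.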

To streamline this workflow for X-ray scientists (at the ALS and elsewhere), Prendergast is working with the SCG to automate the simulation process. A graphical user interface would take a molecular structure as input, build the molecule, run the simulation, and then produce a simulated measurement for comparison with experiment.

“You can almost treat your simulation like a microscopic version of reality that can teach you more about how materials or molecules behave, beyond your initial hypothesis,” said Prendergast. “That should form kind of a loop where: I have this guess; I do the simulation; I see if it matches my guess; if it doesn’t, I’ve learned something… maybe. I adjust my hypothesis and then go back and repeat the process.”